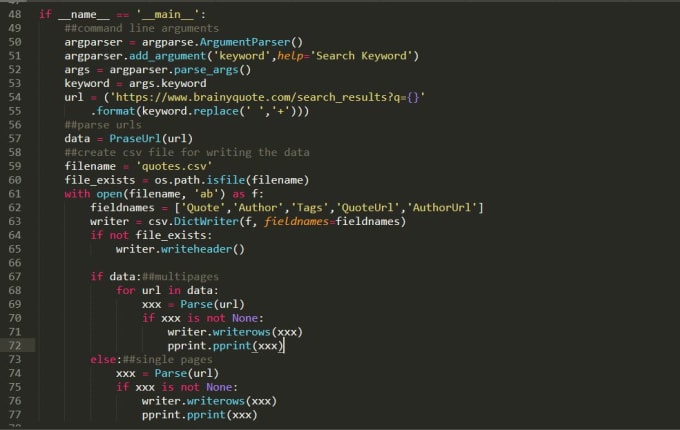

Create webscraper with python
6/6/2023

CSV (comma-separated values) is a file format used to store data in tabular form. Each row in a CSV file represents a record, and each column represents a field. CSV files are commonly used for storing and exchanging data between different software applications.

Python provides several libraries for web scraping, such as BeautifulSoup, Scrapy, and Selenium. In this blog, we will be using BeautifulSoup for web scraping and Pandas for saving the data to a CSV file.

Installing libraries for Web Scraping in Python

To get started with web scraping in Python, you’ll need to install a few libraries. The most popular libraries for web scraping in Python are Beautiful Soup and Requests.

Beautiful Soup is a Python library for parsing HTML and XML documents. It provides a simple API for navigating and searching the document tree. Requests is a library for sending HTTP requests in Python. It makes it easy to send requests and handle responses.

You can install both libraries using pip, which is a package manager for Python. To install Beautiful Soup and Requests, open a terminal or command prompt and enter the following commands:

pip install beautifulsoup4
pip install requests

Once you’ve installed these libraries, you’re ready to start scraping websites.

To scrape a website with Beautiful Soup, you’ll need to send an HTTP request to the website and parse the HTML response.

soup = BeautifulSoup(response.text, 'html.parser')

In this example, we’re using the Requests library to send an HTTP GET request to the website. The response is then parsed with Beautiful Soup, and the title of the website is extracted from the HTML document.

Now that we know how to scrape a website with Python, let’s look at how to save the scraped data to a CSV file. CSV files are a common format for storing tabular data, and they can be easily read and manipulated with a spreadsheet program like Microsoft Excel or Google Sheets.

To save scraped data to a CSV file in Python, we’ll use the built-in csv module. Let’s take an example that scrapes the title and URL of the top 10 Google search results for a given query and saves the results to a CSV file:

table = bs.findAll('table', )
csvFile = open('Text-Editor-Data.csv', 'wt+')

This will save the first row of the table into our CSV file, i.e. Text-Editor-Data.csv.

Best Practices for Saving Web Scraping Data to CSV

Saving web scraped data to a CSV file in Python can be a crucial step in your data analysis process. You should follow certain best practices to ensure that you save your data correctly and efficiently.

Encoding: It is important to choose the correct encoding for your CSV file, especially if you are working with non-English characters. The most commonly used encoding for CSV files is UTF-8, which can handle a wide range of characters.

Delimiter: The delimiter you choose affects how easily other programs can read your CSV file. Most software applications can easily read a comma, making it the most widely used delimiter.

Column Headers: Including column headers in your CSV file can make it easier to understand and analyze your data.
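As a minimal sketch of saving scraped records to CSV with UTF-8 encoding, a comma delimiter, and a header row, the example below uses only Python’s built-in csv module. The `rows` list, the `title` and `url` field names, and the helper names `save_rows_to_csv` and `load_rows_from_csv` are assumptions made for illustration; they do not come from the original post, and a real scraper would build the rows from parsed HTML instead.

```python
import csv

# Hypothetical records standing in for scraped results (assumption for illustration).
rows = [
    {"title": "Café guide", "url": "https://example.com/cafe"},
    {"title": "Editors, compared", "url": "https://example.com/editors"},
]


def save_rows_to_csv(rows, path, fieldnames):
    """Write dict rows to a comma-delimited, UTF-8 encoded CSV file with a header row."""
    # newline="" lets the csv module manage line endings itself.
    with open(path, "w", newline="", encoding="utf-8") as csv_file:
        writer = csv.DictWriter(csv_file, fieldnames=fieldnames, delimiter=",")
        writer.writeheader()    # column headers go in the first row
        writer.writerows(rows)  # values containing commas are quoted automatically


def load_rows_from_csv(path):
    """Read the file back as a list of dicts keyed by the header row."""
    with open(path, newline="", encoding="utf-8") as csv_file:
        return list(csv.DictReader(csv_file))
```

Calling `save_rows_to_csv(rows, "scraped-data.csv", ["title", "url"])` would write the file, where `scraped-data.csv` is a placeholder name. Reading the file back with `load_rows_from_csv` is a quick way to check that non-English characters and embedded commas survived the round trip.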
